Integrate AI into marketing measurement, attribution models, and incrementality testing to improve budget decisions and boost campaign performance.

Quick Answer:

AI improves marketing measurement by connecting forecasting, incrementality testing, and first-party data directly to planning and budget decisions.

It helps teams validate which channels drive real impact, update models faster, and adjust spend while campaigns are running. Instead of relying on static reports, organizations use AI to link marketing KPIs to business outcomes and maintain accuracy in privacy-first conditions.

Marketing measurement in 2026 requires structural integration of AI into core workflows.

This article explains how to:

If your marketing reports produce conflicting signals and your finance team keeps asking questions you can’t confidently answer, the root cause usually sits in the measurement architecture, not in the data itself.

When these components align, decisions are based on validated impact, and AI becomes part of core business operations.

Marketing measurement is under structural pressure. Customer journeys move across devices and platforms before conversion, which weakens session-based attribution and single-touch logic. Google research shows that consumers frequently switch devices during the purchase process, making linear models increasingly limited.

At the same time, AI is already generating measurable economic impact in commercial functions. Marketing and sales rank among the business areas with the highest value potential from AI integration, based on global research into enterprise AI adoption.

Measurement maturity remains uneven. Only 19% of companies qualify as advanced in marketing measurement capabilities. In most companies, models sit apart, KPIs aren’t aligned, and experimentation happens rarely or without structure.

This gap creates real operational risk. Budget decisions are still based on models built for simpler channel setups. Marketing mix modeling, multi-touch attribution, and incrementality testing each answer important questions, but when they run separately, budget decisions rely on partial information. Combining these approaches gives teams a clearer view of long-term impact and day-to-day performance.

As AI becomes embedded into operational systems, measurement shifts from static reporting cycles to continuously updated analytical environments. The question is no longer whether to introduce AI into measurement, but how to integrate it into workflows, validation processes, and decision governance.

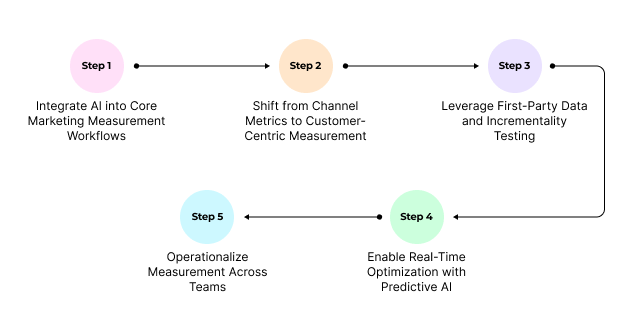

The following five steps outline how to strengthen marketing measurement strategy through AI integration, experimentation discipline, and cross-functional alignment.

Marketing measurement strengthens when AI is embedded into daily analytical routines. Integration connects models, shortens refresh cycles, and strengthens decision control.

Each method answers a different analytical question. When coordinated, they support both strategic planning and execution-level optimization.

AI connects model inputs and outputs so that insights reinforce each other. User-level interaction patterns inform MMM calibration, while aggregated modeling improves how attribution assigns weight. Lift results from experiments are then fed back into the system, refining allocation logic over time.

When these elements work together, performance signals become more consistent across teams. Optimization decisions gain confidence. The distinction between short-term response and long-term brand impact becomes clearer.

Attribution models adjust as interaction patterns change, reflecting how engagement influences outcomes. Incrementality testing runs continuously. Geo-based and holdout tests bring real performance results back into the model, improving budget allocation without interrupting active campaigns.

Campaigns running across multiple channels and audience segments benefit from regular recalibration. Evaluating the full interaction sequence reduces the risk of overinvesting in channels that generate clicks but do not drive revenue.

Unstructured data accounts for over 80% of enterprise information, and preparing it for modeling pipelines requires structural work before any algorithm can operate reliably. Information is spread across on-premises storage, cloud systems, analytics platforms, and campaign tools. Each source follows its own naming logic, hierarchy, and metadata conventions. When these inputs are combined without standardization, the model learns from mismatched information.

This is where AI-powered data ingestion becomes relevant. Modern ingestion systems infer schema relationships, reconcile naming conflicts, and align hierarchies before data enters modeling environments. Infrastructure platforms such as AWS Glue and Confluent support automated schema detection during ingestion, while tools like Airbyte and Informatica apply machine learning to standardize records across systems. Automation isn't the main goal. The priority is consistency you can actually trust.
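As a minimal sketch of what reconciling naming conflicts can look like, the snippet below maps channel labels from different sources onto one canonical vocabulary. The alias table, the sample records, and the `normalize_channel` helper are hypothetical and purely illustrative. The platforms named above handle this with their own mechanisms, which this does not reproduce.

```python
# Hypothetical alias table: each source system labels the same channel differently.
CHANNEL_ALIASES = {
    "paid_search": {"paid search", "ppc", "sem", "google ads"},
    "paid_social": {"paid social", "facebook ads", "meta ads"},
    "email": {"email", "e-mail", "newsletter"},
}


def normalize_channel(raw_label: str) -> str:
    """Return the canonical channel name, or 'unmapped' if no alias matches."""
    cleaned = raw_label.strip().lower().replace("_", " ")
    for canonical, aliases in CHANNEL_ALIASES.items():
        if cleaned in aliases:
            return canonical
    return "unmapped"


# Illustrative records as they might arrive from three different tools.
records = [
    {"source": "ad_platform", "channel": "PPC", "spend": 1200.0},
    {"source": "crm", "channel": " E-mail ", "spend": 150.0},
    {"source": "analytics", "channel": "Paid_Social", "spend": 800.0},
    {"source": "analytics", "channel": "Podcast", "spend": 300.0},
]

# Rewrite every record with the canonical channel label.
standardized = [{**r, "channel": normalize_channel(r["channel"])} for r in records]
```

Keeping unmatched labels as `unmapped` instead of guessing makes gaps in the vocabulary visible before they reach a model.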

When ingestion stabilizes, model behavior changes.

Refresh cycles compress because preprocessing friction declines. Enterprise measurement environments illustrate this shift. Adobe Mix Modeler, for example, processes live campaign inputs and delivers updated insights within hours, allowing teams to evaluate performance signals while campaigns remain active.

In practical terms, this supports:

Quality control becomes embedded into the ingestion layer itself. Validation frameworks such as AWS Glue Data Quality review incoming records before they influence attribution or forecasting logic. Monitoring systems including StreamSets detect irregular pipeline behavior through automated checks, reducing the likelihood of distorted weighting or unstable model outputs.
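To illustrate the idea of reviewing records before they reach attribution or forecasting logic, here is a small rule-based check in plain Python. It is a sketch under assumed field names (`channel`, `spend`, `date`) and assumed rules. It is not the AWS Glue Data Quality or StreamSets API.

```python
from datetime import date


def validate_record(record: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the record passes."""
    problems = []
    if not record.get("channel"):
        problems.append("missing channel")
    spend = record.get("spend")
    if not isinstance(spend, (int, float)) or spend < 0:
        problems.append("spend must be a non-negative number")
    day = record.get("date")
    if not isinstance(day, date) or day > date.today():
        problems.append("date missing or in the future")
    return problems


# Hypothetical incoming batch: one clean record, one with two violations.
incoming = [
    {"channel": "paid_search", "spend": 1200.0, "date": date(2024, 3, 1)},
    {"channel": "", "spend": -50.0, "date": date(2024, 3, 1)},
]

# Only records with no violations continue to the modeling layer.
accepted = [r for r in incoming if not validate_record(r)]
rejected = [(r, validate_record(r)) for r in incoming if validate_record(r)]
```

Rejected records keep their list of violations, so a pipeline owner can see why a row was held back.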

Error management follows the same principle. Streaming environments such as Google Dataflow apply predictive diagnostics to detect ingestion failures early. Platforms like Talend provide automated resolution guidance for recurring integration issues. Monitoring suites including Datadog track pipeline health and flag anomalies in processing workloads. These mechanisms protect modeling stability without interrupting active measurement cycles.

Ingestion, validation, and monitoring operate as one coordinated system. Updates happen on a continuous basis, keeping models aligned with current performance signals. Budget decisions reflect real activity, and planning cycles compress because input quality remains stable and controlled.

Workload orchestration also adapts. Services such as AWS Glue Workload Management scale compute resources based on processing complexity, and integration platforms like Airbyte optimize resource allocation during volume spikes. This flexibility helps ingestion remain stable even when data volume changes.

Stable ingestion keeps model behavior predictable and supports budget decisions grounded in current performance.

Many marketing teams still rely on channel dashboards and session summaries. This structure simplifies reporting, but it fragments behavior. Customers do not move in isolated visits. Their decisions unfold over time, involve multiple devices, and combine paid, owned, and organic interactions. When performance is evaluated per channel, decision logic becomes partial.

You’ve probably measured marketing performance using session-based models. These models group activity into time-bound visits. They show what happened during a session but disconnect interactions that occur days or weeks apart.

Customer decisions rarely fit into isolated sessions. Someone might first discover your product through social media, return via search, open an email, and convert later from a direct visit. Each touchpoint influences the outcome, even if it appears in a different session or on another device.

The shift toward event-based attribution reflects how people actually behave. Google Analytics 4 formalized this transition by replacing session-first logic with an event-driven structure. Interactions are recorded as continuous behavioral signals, not isolated visits.

This changes how influence is evaluated.

Instead of asking which channel “won” the conversion, measurement examines how interactions contribute within the full sequence. Early engagement, repeated exposure, and assisted touchpoints regain analytical weight.

Event-based models allow you to:

When interaction sequences guide evaluation, budget allocation aligns with behavioral progression. Attribution becomes less reactive and more structurally grounded.

Privacy pressure also accelerates this shift. Event-based frameworks rely on first-party signals and authenticated interactions, supporting continuity as third-party tracking declines.

Multi-touch attribution helps restore that sequence. Value is distributed along the path in proportion to observed influence, so early engagement, mid-funnel interaction, and final conversion steps are interpreted as part of one progression. Budget allocation and channel optimization then rely on structured journey logic.
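A minimal sketch of distributing value in proportion to influence might look like the following. The influence weights are hypothetical placeholders. In a real system they would be estimated from interaction data, and the article does not prescribe a specific weighting method.

```python
def attribute_conversion(
    path: list[str], influence: dict[str, float], value: float
) -> dict[str, float]:
    """Split one conversion's value across touchpoints in proportion to their weights."""
    weights = [influence.get(touchpoint, 0.0) for touchpoint in path]
    total = sum(weights)
    if total == 0:
        # No weight information available: fall back to an even (linear) split.
        weights = [1.0] * len(path)
        total = float(len(path))
    credit: dict[str, float] = {}
    for touchpoint, weight in zip(path, weights):
        # A touchpoint that appears more than once accumulates its credit.
        credit[touchpoint] = credit.get(touchpoint, 0.0) + value * weight / total
    return credit


# Hypothetical influence weights and a journey like the one described earlier.
influence = {"social": 0.2, "search": 0.3, "email": 0.1, "direct": 0.4}
path = ["social", "search", "email", "direct"]
credit = attribute_conversion(path, influence, value=100.0)
```

Because the credits always sum to the conversion value, totals across channels stay reconcilable with reported revenue.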

Customer-centric measurement depends on alignment. Interaction signals need to come together in a single journey view, where device activity connects to one identifiable user and online and offline inputs follow the same evaluation logic. This allows influence to be interpreted in the context of the full interaction path.

With the entire sequence visible, performance can be evaluated based on how each interaction moves a user closer to conversion. Budget decisions become more grounded, and investment shifts toward channels that genuinely support progression.

As Marc Pritchard, Chief Brand Officer of P&G, stated: "There is no reason we shouldn't be able to fix this problem and make media measurable across every platform."

Incrementality testing answers a practical question: what changes when marketing activity changes?

In complex channel setups, attribution models or MMM alone cannot fully explain cause and effect. Controlled experiments help validate whether measurement reflects real impact. They also allow evaluation within privacy constraints.

"Incrementality is considered the gold standard for causal inference, providing actionable insights into which media investments are genuinely moving the needle." — Measured

Geo-based incrementality testing is a controlled experiment that measures how marketing activity changes outcomes in exposed regions versus matched control regions, without requiring individual user tracking.

Some regions receive marketing exposure, others do not. Performance differences between these groups indicate incremental lift.

Because analysis happens at the regional level, personal user tracking is not required. This makes geo testing structurally aligned with privacy-focused measurement.

For results to be reliable, treatment and control regions must be closely matched before the test begins. In practice, this often requires ≥95% historical correlation on primary KPIs. Alignment usually includes:

Most geo tests require 12–24 months of daily historical data to produce reliable results. The target metric must be defined precisely. A concrete outcome such as “incremental funded accounts” gives teams a clearer signal than a broad label like “performance.”
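The correlation requirement for matched regions can be checked with a few lines of code. The sketch below uses Pearson correlation on a primary KPI and the 0.95 threshold mentioned above. The region names and the short series are made up for illustration, and a real test would use the much longer daily history described here.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation between two equally long series."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def matched_controls(
    treatment: list[float], candidates: dict[str, list[float]], threshold: float = 0.95
) -> dict[str, float]:
    """Keep candidate control regions whose KPI history tracks the treatment region."""
    scores = {name: pearson(treatment, series) for name, series in candidates.items()}
    return {name: r for name, r in scores.items() if r >= threshold}


# Hypothetical pre-test KPI history (e.g. daily conversions) per region.
treatment_region = [100, 110, 105, 120, 130, 125]
candidate_regions = {
    "region_a": [50, 56, 52, 61, 64, 63],   # moves with the treatment region
    "region_b": [80, 70, 90, 60, 85, 75],   # moves differently
}

matches = matched_controls(treatment_region, candidate_regions)
```

Correlation is only one matching criterion. Regions that pass this check may still differ in ways the series does not show.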

Modern geo lift analysis uses regression-based methods to compare exposed regions with control groups. The objective is to estimate incremental impact and understand the likely range of outcomes.
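One simple regression-based approach, shown here as a sketch and not as the method any particular vendor uses, fits the pre-test relationship between control and treated regions, projects a counterfactual for the test period, and reads lift as actual minus counterfactual. The data is invented. The range is a rough band built from pre-period residuals and is not a formal confidence interval.

```python
from math import sqrt


def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def estimate_lift(control_pre, treated_pre, control_test, treated_test):
    """Return (total lift, rough range) for the test period."""
    intercept, slope = fit_line(control_pre, treated_pre)
    residuals = [t - (intercept + slope * c) for c, t in zip(control_pre, treated_pre)]
    # Residual standard deviation with n - 2 degrees of freedom (two fitted parameters).
    sigma = sqrt(sum(r ** 2 for r in residuals) / (len(residuals) - 2))
    # What the treated region would likely have done without the campaign.
    counterfactual = [intercept + slope * c for c in control_test]
    lift = sum(treated_test) - sum(counterfactual)
    # Rough band: ignores uncertainty in the fitted coefficients.
    margin = 2 * sigma * sqrt(len(control_test))
    return lift, (lift - margin, lift + margin)


# Hypothetical KPI series: pre-test period, then the test period with media live.
control_pre = [100, 104, 98, 110, 106, 102]
treated_pre = [201, 207, 197, 221, 211, 205]
control_test = [105, 108, 103]
treated_test = [231, 238, 226]

lift, (low, high) = estimate_lift(control_pre, treated_pre, control_test, treated_test)
```

If the lower end of the range stays above zero, the test offers some evidence of positive incremental impact under these simplified assumptions.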

Well-structured geo experiments serve as validation points for attribution and MMM. They help confirm that modeled impact aligns with real market behavior.

Conversion lift studies such as geo experiments, holdout tests, and randomized controlled trials provide a direct view of causal impact. They show how performance changes when exposure changes under controlled conditions. For this reason, they serve as a reference point for evaluating model accuracy.

Marketing Mix Modeling needs to align with observed lift. If a model estimates incremental contribution, that estimate should reflect impact confirmed through controlled testing. When multiple MMM specifications show similar statistical fit but produce different contribution estimates, incrementality testing helps identify which version reflects actual market response.

Bayesian MMM frameworks let you feed real experimental results back into your model assumptions, so what you're measuring stays grounded in what's actually happening in the market. If controlled tests and MMM outputs conflict, the discrepancy usually indicates structural issues in the model, such as incorrect lag assumptions, saturation curves, or channel interaction effects. Reviewing these elements improves alignment between modeled contribution and real impact.
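The principle of feeding experimental results into model assumptions can be illustrated with a conjugate normal update. This is a deliberate simplification: full Bayesian MMM frameworks estimate many parameters jointly, and the numbers below are hypothetical. Here the model's current belief about a channel's ROI acts as the prior, and a lift test supplies the new evidence.

```python
def update_with_experiment(
    prior_mean: float, prior_sd: float, lift_mean: float, lift_se: float
) -> tuple[float, float]:
    """Combine a normal prior with a normally distributed experiment estimate."""
    prior_precision = 1.0 / prior_sd ** 2
    data_precision = 1.0 / lift_se ** 2
    posterior_precision = prior_precision + data_precision
    # Precision-weighted average: the more certain source pulls the estimate harder.
    posterior_mean = (
        prior_mean * prior_precision + lift_mean * data_precision
    ) / posterior_precision
    posterior_sd = posterior_precision ** -0.5
    return posterior_mean, posterior_sd


# Hypothetical inputs: the model believes ROI is about 2.0 but is unsure (sd 1.0);
# a geo test measured 1.2 with a tighter standard error of 0.5.
posterior_mean, posterior_sd = update_with_experiment(2.0, 1.0, 1.2, 0.5)
```

With these inputs the updated estimate moves most of the way toward the experiment, because the test result is the more precise of the two sources.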

Out-of-sample validation checks how the model performs on new data. The model is trained on one time period and tested on the next. If results remain stable, forecasts can be trusted. This step reduces the risk of overfitting and improves planning confidence.
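A time-ordered holdout check can be sketched as follows. The single-variable spend-to-sales model, the data, and the choice of mean absolute percentage error (MAPE) as the metric are all assumptions for illustration. A real MMM has many more inputs, but the train-then-test sequence is the same.

```python
def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def mape(actual: list[float], predicted: list[float]) -> float:
    """Mean absolute percentage error, as a fraction (0.05 means 5%)."""
    return sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical weekly spend and sales, in time order.
spend = [10, 12, 9, 15, 14, 11, 13, 16]
sales = [52, 61, 47, 76, 70, 56, 66, 81]

# Train on the first six weeks, then test on the two weeks that follow.
split = 6
intercept, slope = fit_line(spend[:split], sales[:split])
holdout_pred = [intercept + slope * s for s in spend[split:]]
holdout_error = mape(sales[split:], holdout_pred)
```

Splitting by time instead of at random matters: the model is only ever asked to predict periods it has not seen, which mirrors how forecasts are used in planning.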

Andrew Covato, Founder & CEO of Growth By Science: "Experimentation without MMM is blind. MMM without experimentation is guesswork. Attribution without either? You're just paying platforms to lie to you with pretty dashboards."

MMM and incrementality testing answer different business questions. MMM helps understand overall media impact over time. Controlled experiments show the effect of specific actions. Used together, they keep contribution estimates tied to observed results and help teams adjust decisions based on real market response.

First-party data is the base of today’s measurement systems. As third-party identifiers fade and regulation tightens, consent-based interaction data becomes the primary source of insight. When handled correctly, it stabilizes models and makes attribution reflect real customer behavior.

Effective use of first-party signals requires operational discipline. Customers must understand what is collected, how it is used, and how it is protected. Transparent consent flows, clearly defined data policies, and secure storage practices function as embedded components of the measurement architecture.

A sustainable approach depends on value exchange. When customers see tangible benefit in sharing data through relevant communication, tailored offers, or improved service, signal quality improves and modeling reliability increases.

From an analytical standpoint, first-party signals expand attribution logic. Behavioral sequences, repeated engagement, and assisted influence can be incorporated into evaluation models. Budget allocation follows verified interaction history and progression patterns instead of isolated conversion events.

Operationally, privacy-first measurement relies on three components:

With consent integrated into attribution logic and signal quality controlled at the source, models produce consistent outputs, giving teams a reliable basis for budget decisions.

Privacy-first measurement should not be treated as a constraint to work around. It serves as a condition for long-term model reliability. When consent is embedded into your data architecture, changes in tracking rules no longer require constant intervention.

Marketing teams used to wait for reports before adjusting budgets. Predictive AI shortens that cycle. Instead of reacting after a campaign ends, teams can see where results are heading and adjust spend or channel mix while activity is still live.

Predictive models bring planning and evaluation closer together. Historical results, behavioral signals, and market context help estimate what is likely to happen next. Budget and channel decisions are guided by expected outcomes, not only by past reports.

AI can surface patterns that manual review often misses. Shifts in engagement, early audience response to messaging, and changes in demand become visible sooner. Teams can reallocate spend while momentum is building instead of waiting for post-campaign analysis.

Predictive modeling also supports demand detection, message refinement, and media delivery optimization based on user behavior and intent. Planning becomes structured around expected impact, with adjustments informed by updated signals during execution.

AI-powered forecasting scales this logic. Models process large variable sets simultaneously, identifying interaction effects that are difficult to isolate manually. This strengthens channel mix planning, lead prioritization, and budget sequencing decisions.

Optimization becomes continuous when predictive models integrate directly into performance monitoring. Instead of reviewing results after campaigns close, teams evaluate signal shifts as they appear and refine execution in shorter cycles.

A structured loop emerges: performance observation, modeled insight, tactical adjustment, and controlled validation. The objective is to make learning part of execution and planning cycles.

In practice, this approach has delivered significant impact. One global brand reported that its automated test-and-learn framework generated $42 million in incremental value within a single market.

AI refines experimentation logic. Traffic allocation adapts as performance signals stabilize. Learning accumulates within the system instead of restarting with each campaign. Optimization becomes embedded in execution workflows.
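The article does not name a specific allocation method. One common choice for adaptive traffic allocation is Thompson sampling, sketched below with Beta posteriors over conversion rates. The variant names, counts, and uniform Beta(1, 1) prior are assumptions for illustration.

```python
import random


def thompson_allocation(
    results: dict[str, tuple[int, int]], draws: int = 2000, seed: int = 0
) -> dict[str, float]:
    """Estimate traffic shares as each variant's probability of being the best.

    `results` maps variant name -> (conversions, visitors).
    """
    rng = random.Random(seed)
    wins = {name: 0 for name in results}
    for _ in range(draws):
        # Draw one plausible conversion rate per variant from its Beta posterior.
        sampled = {
            name: rng.betavariate(1 + conversions, 1 + visitors - conversions)
            for name, (conversions, visitors) in results.items()
        }
        wins[max(sampled, key=sampled.get)] += 1
    return {name: count / draws for name, count in wins.items()}


# Hypothetical running totals for two creative variants.
results = {"variant_a": (30, 1000), "variant_b": (55, 1000)}
shares = thompson_allocation(results)
```

Early on, when counts are small, the posteriors overlap and traffic stays spread across variants. As evidence accumulates, the share shifts toward the stronger performer without a manual cutover.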

Predictive measurement compresses planning cycles. Instead of manually consolidating performance inputs from multiple systems, models process structured signals and generate forward projections in shorter time frames.

Budget allocation responds to observed demand shifts. Channels with stronger modeled impact receive incremental investment. Underperforming placements are adjusted before inefficiencies compound.

Predictive AI supports planning through:

AI identifies which accounts are gaining momentum, showing early interest, or losing attention. Budget allocation follows these signals.

When predictive evaluation becomes part of measurement, planning discussions shift. Teams compare scenarios before reallocating spend, and adjustments happen while campaigns are still active.

AI-driven performance evaluation loses impact when it remains confined to the marketing team. The CMO who can walk into a budget review with modeled scenarios is the one who keeps their budget intact.

More than half of best-in-class measurement professionals share effectiveness insights directly with leadership, positioning performance analysis as part of broader business decision-making.

Alignment with finance begins before budget approval. It starts with shared questions. What are we testing? What outcome will change allocation decisions? How will results influence planning assumptions?

A structured learning agenda clarifies these points in advance. Experiments are defined jointly. Success metrics are agreed upon upfront. Outcomes feed directly into budget scenarios. This reduces defensive discussions and replaces them with evidence-based dialogue.

Collaboration works when finance joins hypothesis design, test planning, and reviews. Media investments become experiments with clear learning goals. Planning shifts from justifying spend to adjusting allocation.

Performance indicators must connect to business priorities. Each goal needs a focused set of key performance indicators that show operational impact. Metrics that don't influence decisions waste attention.

Quarterly business reviews provide structure. They check progress against targets and determine whether budget allocation needs adjustment. Monthly monitoring of sensitive indicators catches issues early without fragmenting strategy.

Integrated dashboards consolidate signals into a single view. Automated reporting reduces manual reconciliation and improves coordination across teams. When leadership sees the same inputs as marketing and finance, alignment strengthens.

If teams don’t trust the model, they won’t use it for budget decisions. People need to see what goes in, how weighting works, and why allocation shifts.

Transparent AI practices make that visible. Assumptions are documented. Allocation logic can be reviewed. Bias is identified early. The model stops being a black box and becomes part of the working process.

When data sources, governance rules, and validation logic are clearly defined, marketing and finance operate from the same baseline. Results can be questioned and refined. Updates reflect how the business actually runs.

With shared KPIs and explicit modeling standards, insights move directly into planning and allocation. Decisions rely on logic teams understand and can defend.

When models, experiments, and planning cycles run separately, signals conflict and decisions slow down.

Darwin designs and operationalizes:

If your current setup produces conflicting reports or slow budget decisions, the issue lies in system design.

Q1. How does AI improve marketing measurement?

AI connects modeling, attribution, and experimentation into one system. Models update on current inputs, attribution reflects full interaction paths, and budget decisions rely on validated performance signals.

Q2. Why shift to customer-centric measurement?

Channel-level reporting fragments behavior. A journey-based view connects search, paid media, email, direct visits, and offline signals into one progression, allowing evaluation of how interactions influence movement toward conversion.

Q3. Why is first-party data critical today?

Consent-based interaction signals provide stable inputs for attribution and modeling. As third-party tracking declines, first-party data supports continuity in evaluation and improves alignment between marketing activity and revenue outcomes.

Q4. How does AI reduce planning cycles?

Model refresh cycles shorten. Forecasting updates as new signals enter the system. Budget reallocation happens during active campaigns instead of after reporting periods close.

Q5. Why does transparency matter in AI-driven measurement?

Clear model logic enables finance and leadership to interpret results confidently. When stakeholders can review assumptions and challenge outputs, allocation decisions become more consistent and defensible.
